Good panel discussion at the Deloitte Connect event yesterday: “Why Australia? Conditions that cement us as a regional leader and exporter of AI.”

Thanks to Joana Valente for moderating, and to fellow panellists Paul Grimes, Belinda Dennett, and Rianne Van Veldhuizen for a thoughtful conversation.

My contribution focused on a few questions/dimensions that may help frame the discussion.

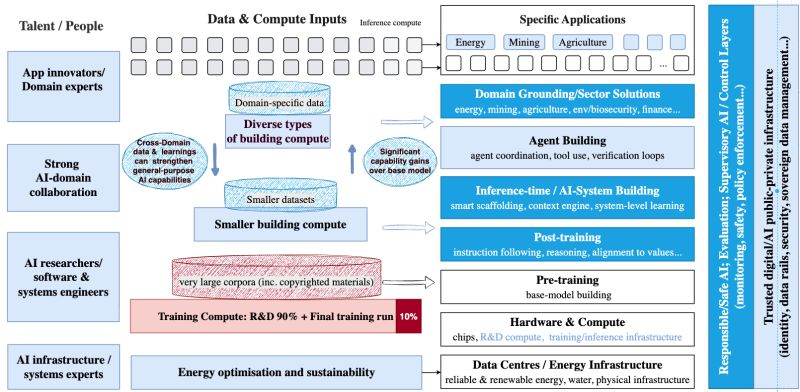

First, it helps to look beyond the simple framing of “build our own LLM” versus “just adopt other people’s AI.” The AI stack is far more nuanced. Between base model pre-training and applications sit many layers of innovation, including post-training (instruction following, reasoning, alignment), inference-time and system-level techniques, the agent layer, domain grounding, and supervisory AI/control layers that monitor other AI systems. All of this occurs before we even reach application-specific innovation.

Many of the largest general AI capability gains today are happening in these middle layers. Australia already has research strengths in post-training methods, domain-grounded AI, and responsible and safe AI systems, where innovation often depends more on scientific talent and domain advantage than on sheer data or compute scale. These capabilities can also strengthen the competitiveness of any future sovereign LLM developed in Australia. Even if such a sovereign model is not the most powerful globally at the base-model level, advantages in post-training, domain grounding and system integration can make it highly competitive at the overall system level.

Second, domain grounding matters. Exposure to complex real-world domains strengthens general-purpose AI capability itself, not just specific applications. Australia has world-leading competitive advantages in energy, minerals, agriculture, environment, and biosecurity. Leveraging these domains can create sector-wide innovation ecosystems.

Third, the conversation about AI infrastructure should not stop at hardware compute, data centres, or even the AI stack itself. There is also a crucial trust layer that is not always considered part of core AI. This includes identity systems for humans, AI, and organisations, mechanisms that unlock the value of sovereign/sensitive data while keeping it secure, and other control mechanisms that allow AI systems to operate in trusted environments.

In a world where trust and data sovereignty matter, new models of export may emerge. Some innovations may allow other countries’ data/AI workloads to operate within Australia’s trusted environments, or enable trusted AI systems developed in Australia to operate close to sovereign/sensitive data in other countries. The opportunity is not only to export raw compute, but to export trusted AI and trusted AI infrastructure.

The strategic question is which parts of the nuanced AI stack Australia is best positioned to lead in, and how to ensure the conditions exist for research and industry to explore those opportunities.

CSIRO/CSIRO’s Data61 is focusing more on the blue bits in the figure above.

About Me

Director/Head of CSIRO’s Data61

Conjoint Professor, CSE UNSW

For other roles, see LinkedIn & Professional activities.

If you’d like to invite me to give a talk, please see here & email liming.zhu@data61.csiro.au
