We’re very excited about our contribution to the redesign of NSW’s AI Assessment Framework, released last week. It includes several world-leading design elements that reflect a more mature way of thinking about AI risk.

Most AI risk frameworks don’t fail because they miss risks. They fail because they never narrow them in a principled way. This post shares the logic behind a different approach we’ve taken.

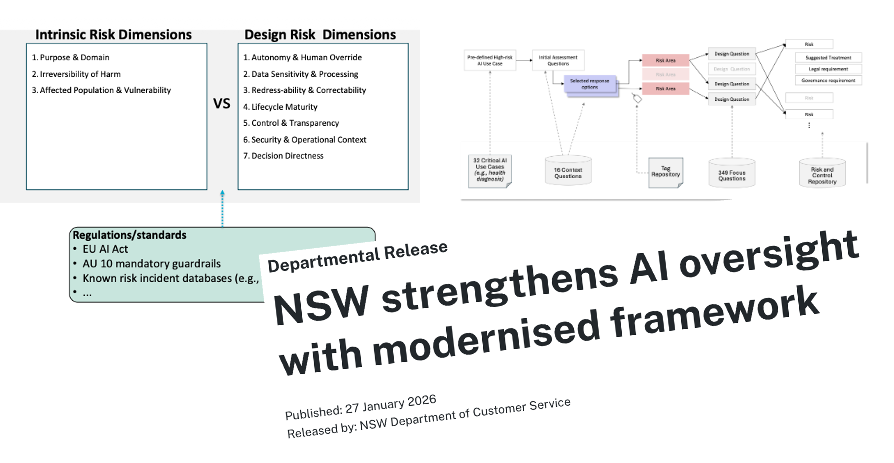

The starting point is a clear separation between intrinsic risk and design-introduced risk. Intrinsic risks exist regardless of whether a use case is delivered by AI, traditional software, or humans, and should largely be governed through existing policy, legal, and sector controls. But even within design risks, many are not uniquely “AI risks” either. Issues such as data handling, access control, cybersecurity, and privacy often sit squarely with established IT, software engineering, and legislative controls.

What remains, once those are stripped away, is a much smaller set of genuinely AI-specific risk characteristics: autonomy, adaptivity, opacity, scale, and feedback effects, often interacting with other non-AI design risks. This filtering, and the careful analysis of interactions behind the scenes, is what collapses thousands of possible risks and controls into a small, defensible set that actually deserves focused attention.
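As a rough illustration of that filtering logic, here is a minimal Python sketch. It is not the framework's actual method or data model: the `Risk` class, the `triage` function, the bucket names, and the three example risks are all hypothetical, made up only to show how the two-step narrowing could work. The only things taken from the text above are the intrinsic versus design split and the five AI-specific characteristics.

```python
from dataclasses import dataclass

# The five AI-specific characteristics named in the post.
AI_CHARACTERISTICS = frozenset(
    {"autonomy", "adaptivity", "opacity", "scale", "feedback"}
)


@dataclass(frozen=True)
class Risk:
    """Hypothetical risk record; field names are assumptions for this sketch."""
    name: str
    intrinsic: bool  # True if the risk exists however the use case is delivered
    ai_characteristics: frozenset = frozenset()  # empty for non-AI design risks


def triage(risks):
    """Sort risks into three buckets following the narrowing described above."""
    buckets = {
        "existing_policy_controls": [],  # intrinsic risks
        "established_it_controls": [],   # design risks that are not AI-specific
        "ai_specific_focus": [],         # what remains after filtering
    }
    for risk in risks:
        if risk.intrinsic:
            buckets["existing_policy_controls"].append(risk.name)
        elif risk.ai_characteristics & AI_CHARACTERISTICS:
            buckets["ai_specific_focus"].append(risk.name)
        else:
            buckets["established_it_controls"].append(risk.name)
    return buckets


# Illustrative risks only; none of these come from the framework itself.
example_risks = [
    Risk("wrongful denial of a service", intrinsic=True),
    Risk("weak access control on case data", intrinsic=False),
    Risk(
        "model drifts after retraining on its own outputs",
        intrinsic=False,
        ai_characteristics=frozenset({"adaptivity", "feedback"}),
    ),
]

result = triage(example_risks)
for bucket, names in result.items():
    print(f"{bucket}: {names}")
```

In practice the hard part is the classification itself and the interactions between AI and non-AI design risks, which a flat lookup like this does not capture.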

From there, we introduce layered rubrics to deal with uncertainty, edge cases, and false reassurance. These layers are designed to catch what self-assessments often miss, including the comforting fiction of the “perfect human in the loop”. In higher-risk settings, these rubrics must ultimately be backed by evidence that controls actually work, not just a declaration that they exist.

The real question for any organisation is not how many risks you list at the organisational or use-case level, but which risks are genuinely additional because of AI, which ones you are responsible for controlling, and how you know those controls actually work.