This is a slightly delayed post because I was on annual leave when the National AI Centre released **Being clear about AI generated content – A guide for business** (https://lnkd.in/gpBUmeCX). Coming back, I saw the level of uptake, discussion, and genuine relief from the community, and I want to say how pleased I am with the contribution of CSIRO’s Data61 to this work.

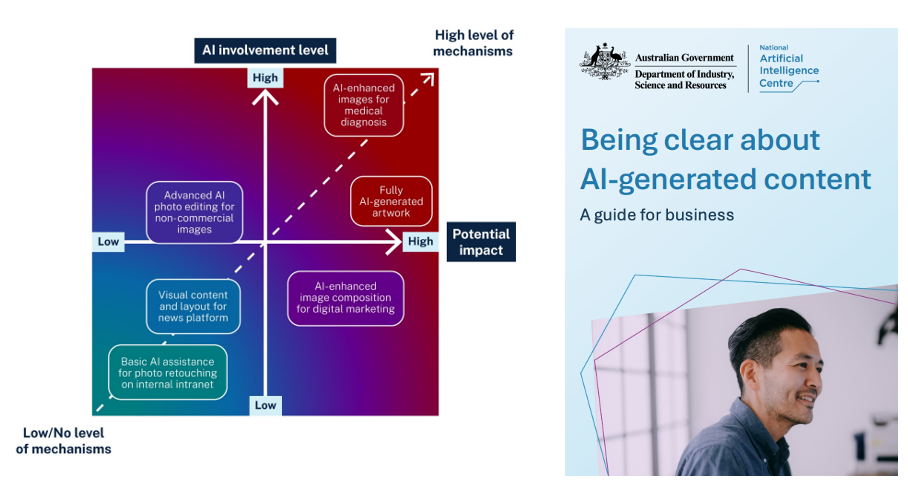

Beyond being a very practical guide with clear, role-specific actions, it makes one design choice I think is worth highlighting. Most guidance on AI labelling/watermarking and disclosure defaults to a classic risk formula: estimate likelihood, estimate impact, then combine them. In practice, we consistently see this break down, because likelihood is interpreted very differently from person to person. Some guess based on fast-moving AI behaviour. Others assume any human in the loop drives likelihood close to zero. The result is inconsistency and false confidence.

Drawing on the risk management literature and on international regulatory practice, such as that of the FDA and Australia’s TGA, the guide instead emphasises potential impact and AI involvement level. Asking how involved AI actually is in creating the content turns out to be far more concrete, assessable, and stable than guessing a likelihood.
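To make the contrast concrete, here is a minimal Python sketch. It is purely illustrative and is not the guide's actual method: the level names (`Impact`, `Involvement`), the additive scoring rule, and the three tier labels are all hypothetical, chosen only to show why a subjective likelihood input produces unstable results while an observable involvement level does not.

```python
from enum import IntEnum


class Impact(IntEnum):
    """Hypothetical impact levels (not taken from the guide)."""
    LOW = 1
    MEDIUM = 2
    HIGH = 3


class Involvement(IntEnum):
    """Hypothetical levels of AI involvement in creating the content."""
    MINOR = 1        # e.g. light editing assistance
    SUBSTANTIAL = 2  # e.g. AI drafts, a human revises
    FULL = 3         # e.g. AI generates the content end to end


def classic_risk(likelihood: float, impact: Impact) -> float:
    """Classic formula: a subjective likelihood guess times impact."""
    return likelihood * impact


def disclosure_tier(impact: Impact, involvement: Involvement) -> str:
    """Hypothetical rule: derive a tier from impact and involvement only."""
    total = impact + involvement
    if total <= 3:
        return "optional_disclosure"
    if total == 4:
        return "label"
    return "label_and_watermark"


# Two assessors look at the same high-impact, fully AI-generated content.
# One guesses likelihood from AI behaviour; the other assumes a human in
# the loop drives it close to zero. Their classic scores diverge widely.
score_a = classic_risk(0.6, Impact.HIGH)
score_b = classic_risk(0.05, Impact.HIGH)

# Both assessors can observe the same involvement level, so the
# involvement-based tier is the same regardless of who assesses it.
tier = disclosure_tier(Impact.HIGH, Involvement.FULL)
```

Under these assumed numbers, the two classic scores differ by more than a factor of ten for identical content, while the involvement-based tier depends only on inputs that both assessors can check.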

This framing also connects directly to our recent work on human oversight design. We released two papers, one on general human oversight design (https://lnkd.in/g4HrtW4A) and another focused on oversight in government decision making (https://lnkd.in/gxigvJme). Since release, both have consistently ranked among the ten most downloaded papers on the major international preprint archive, which suggests this is resonating well beyond Australia.

We continue to work in this area because we believe the future of human oversight design is inseparable from the future of work and from humanity’s role in an AI-rich world. As AI systems take on more generative and decision-shaping tasks, the human role increasingly shifts towards verification, judgement, and accountability rather than direct production. Over January, we will be releasing further work on the nature of “verification”, on how to verify outcomes, whether by AI or by humans, and on how to preserve meaningful human agency, both at work and in everyday life.

About Me

Director/Head of CSIRO’s Data61

Conjoint Professor, CSE UNSW

If you’d like to invite me to give a talk, please see here & email liming.zhu@data61.csiro.au